The Unsolvable "Human Test"

It's Not the Bots That Will Kill the Internet, but the Bot-Killers

It’s a well-known reality of war that first movers with new technologies and methods have an advantage on the battlefield. Nothing is ever static, though, and soon the enemy regroups and develops countermeasures. Some of these cost just as much to deploy and maintain, and the result is stalemate. Sometimes a very cheap solution proves effective, rendering the new technology obsolete. There’s a push and pull in these conflicts, with each side trying to get the upper hand and eventually build an advantage so overwhelming that the enemy is neutralized. The cycle runs many layers deep, and oftentimes the counter to the counter is so complicated and onerous that the new weapon might as well not be used at all.

Cyber-attacks follow the same pattern. New hacking methods and bots are created to exploit new vulnerabilities as old ones are closed. Their mission is to breach a restricted space, whether it be a website, data cloud, or protocol, and extract the value within. In the case of social media, armies of bots are designed to get into the system and find ways to steal from users, exploit engagement benefits, or spread propaganda. Social media platforms are constantly fighting the proliferation of bots, which require only a minuscule sliver of energy and never sleep.

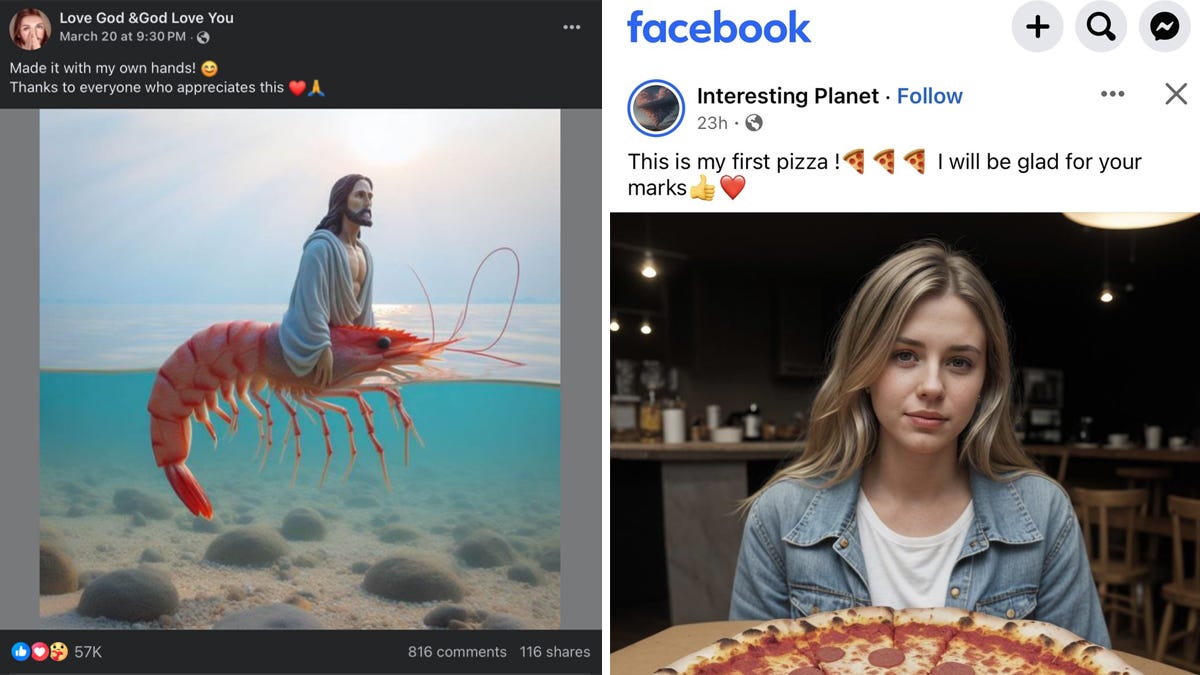

X has a significant problem with bot networks, especially when it comes to faked engagement and follower counts; many e-celebs likely got their start through botnet follows. Instagram tells a similar story. Still, the uncontested king of bots, and of the A.I. slop that comes with them, is Facebook.

Countless videos have documented the spam of A.I. images: thousands of identical pages posting fake pictures every hour, not to mention relentless messages targeting the elderly in attempts to scam them out of their money. There’s an assumption that the bot problem on Facebook is a matter of policy, that the company simply doesn’t care. In reality, it cares very much and has built incredibly complex suites of tools to detect anomalous behavior and remove suspected bots, all without a human in the loop. When you are trying to gatekeep Facebook and reserve it for humans in good standing, the question becomes “How can I tell if this is a human?”

It’s very hard to verify humanness, and the proliferation of deepfakes is accelerating the problem. Software has access to the same interface as a human and can click, scan, post, and browse just like Grandpa. There are some simple ways to spot a bot, such as typing and posting faster than humanly possible, executing a multitude of commands in rapid succession, or making queries in a nonsensical way. This sort of low-hanging fruit can be thwarted with rudimentary analysis. Soon the bots get more sophisticated, mimicking human activity by viewing profiles, messaging people, and sending friend requests to throw the overseers off the scent, then harvesting data between these humanlike actions. The countermeasures must become more advanced, the definition of bot behavior wider, and the range of acceptable human behavior narrower. After enough iterations of this cat-and-mouse game, bots can pass as human according to the detection algorithm, while the same algorithm flags countless normal people as false positives.
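To make that escalation concrete, here is a toy Python sketch of the crudest kind of rule described above: flag any account whose actions arrive faster than a fixed rate. The `Session` record, the `max_apm` (actions per minute) threshold, and the sample timings are all hypothetical illustrations, not a description of how Facebook’s detection actually works. The point is the trade-off: tightening the threshold to catch slower bots starts flagging a fast-clicking human, while a bot that simply paces itself slips under both rules.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical record of one account's activity."""
    name: str
    timestamps: list[float]  # seconds at which each action (click, post, request) occurred


def actions_per_minute(session: Session) -> float:
    """Average action rate across the session's observed time span."""
    ts = sorted(session.timestamps)
    if len(ts) < 2:
        return 0.0
    span = ts[-1] - ts[0]
    if span <= 0:
        # Several actions at the same instant: treat as infinitely fast.
        return float("inf")
    return (len(ts) - 1) / span * 60.0


def flag_as_bot(session: Session, max_apm: float) -> bool:
    """Hypothetical rule: anything faster than max_apm is called a bot."""
    return actions_per_minute(session) > max_apm


# Illustrative sessions with made-up numbers, not real traffic data.
naive_bot = Session("naive bot", [i * 0.1 for i in range(50)])    # about 600 actions/min
busy_human = Session("busy human", [i * 3.0 for i in range(30)])  # about 20 actions/min
mimic_bot = Session("mimic bot", [i * 4.0 for i in range(30)])    # 15 actions/min, paced to look human

if __name__ == "__main__":
    for max_apm in (120.0, 18.0):  # a loose rule, then a tightened one
        flagged = [s.name for s in (naive_bot, busy_human, mimic_bot)
                   if flag_as_bot(s, max_apm)]
        print(f"threshold {max_apm}: flagged {flagged}")
```

Run as a script, the loose 120-per-minute rule catches only the naive bot; the tightened 18-per-minute rule also catches the busy human, while the paced bot passes both.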

Nowhere is this clearer than when trying to create a Facebook account. During Covid, one of my posts got fact-checked, not because it was wrong, but because the fact-checkers objected to its “tone.” I’d had enough of that shit, so I wiped my Facebook account clean. For a current project, I was looking to buy ad space. As much as I hate to admit it, Facebook is far superior to Google or Amazon when you want to run an advertising campaign in a specific geographic area. I decided to create a new account and throw a few bucks into marketing.

Things seemed fine at first. I created the account with an email address I had never used, filled in my name and a couple of profile tidbits, and started making a page for my ad. About ten minutes after I created the account, it asked for additional verification. Odd, but okay. I solved a captcha, and it then asked for a video. Annoyed, I turned on the camera and turned my head to prove I was not a bot. It said the account was under review.

Two hours later, I received an email saying my account was suspended and there was no recourse. I doubt there was ever a human in the loop.

Frustrated, I tried another old email address, which, I had forgotten, was tied to a burner Facebook account I had created three years earlier and never used. Rejoice! I even added my phone number for verification and my credit card as extra proof that this was an actual person. I went through the steps again, making a business page and navigating to my ad campaign. After a couple of hours of work, it again demanded more identification. Again, I did the captcha and the video. I waited for the inevitable ban.

Subscribe to Social Matter to keep reading this post and get 7 days of free access to the full post archives.